npm install axiosĬheerio is a lightweight library you can use to transverse the DOM of the HTML page downloaded using Axios for the purpose of collecting the required data. You will be using it to download pages you want to scrape data from. It is an HTTP Client, just like a browser that sends web requests and gets back a response for you. The Axios module is one of the most important web scraping libraries. Now, let start installing the Node.js packages for web scraper – don’t close Command Prompt yet. Then launch Command Prompt (MS-DOS/ command line) and navigate to the folder using the command below. After installation, the next step is to install the necessary libraries/modules for web scraping.įor this tutorial, I will advise you to create a new folder in your desktop and name it web scraping. You can also confirm by looking out for the Node.js application in your list of installed programs. If no error message is returned, then Node has been installed successfully. After installing Node.js, you can enter the below code in your command line to see if it has been installed successfully. You can install Node.js from the Node.js official website – file size is less than 20MB for Windows users.

Unlike the JavaScript runtime that comes installed in every modern browser, you will need to install Node.js for you to use it for your development. I will show you how to code a web scraper using JavaScript and some Node.js libraries. With Node.js, you can now use one language to write codes for both frontend and backend.Īs a JavaScript developer, you can develop a complete web scraper using JavaScript, and you will use Node.js to run it. This now made many developers take JavaScript as a complete language to be taken seriously – and many libraries and frameworks were developed for it to make programming backend using JavaScript easily. With Node.js, you can write codes and get them to run on PCs and servers, just like PHP, Java, and Python. Node.js is a JavaScript runtime environment built on Chrome’s V8 JavaScript Engine. However, Developers thought that JavaScript is a complete programming language and, as such, shouldn’t be confined to only the browser environment. This then means that you will need to be proficient in two languages to be able to do both frontend and backend development. For this reason, you cannot use it for backend development as you can use the likes of Python, Java, and C++. Outside of a browser, JavaScript cannot run. JavaScript was originally developed for frontend web development to add interactivity and responsiveness to web pages. In this article, I will be showing you how to develop web scrapers using JavaScript.

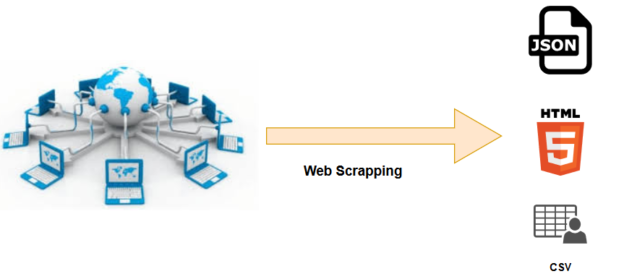

In recent times, its popularity as a language for developing web scrapers is on the rise – thanks to the availability of web scraping libraries. Being that as it may, some languages are much more popular than others as far as developing web scrapers are concerned. Java, PHP, Python, JavaScript, C/C++, and C#, among others, have been used for writing web scrapers. Web scrapers can be developed using any programming language that is Turing complete. Read more, What is Web Scraping and Is it Legal? This automated means of collecting publicly accessible data on web pages using web scrapers is known as web scraping. For this reason, researchers have to use automated means to collect these data on their own. Unfortunately, most websites do not make it easy for data scientists to collect the required data. Social and business researchers are interested in collecting data from websites that have data of interest to them. We are in an era where businesses depend largely on data, and the Internet is a huge source of data with textual data being the most important. Do you plan to scrape websites using JavaScript? With the help of the Node.js platform and its associated libraries, you can use JavaScript to develop web scrapers that can scrape data from any website you like.
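As a rough sketch of how Node.js, Axios, and Cheerio fit together in a scraper like the one this tutorial builds, the script below reports the Node.js version, checks whether the two packages are installed, and, if you pass it a URL, downloads that page and prints its title. The file name `scrape-title.js`, the helper names `isInstalled` and `scrapeTitle`, and the choice of the `<title>` element are my own assumptions for illustration, not part of the original tutorial.

```javascript
// scrape-title.js: a minimal sketch, not a production scraper.
// Assumes `npm install axios cheerio` has been run in this folder.

// Returns true if a module can be resolved from this folder, without loading it.
function isInstalled(name) {
  try {
    require.resolve(name);
    return true;
  } catch (err) {
    return false;
  }
}

// Downloads a page with Axios and reads its <title> text with Cheerio.
async function scrapeTitle(url) {
  // Required lazily so the checks in main() still run before the packages exist.
  const axios = require('axios');
  const cheerio = require('cheerio');

  const response = await axios.get(url);  // HTTP GET; response.data holds the HTML
  const $ = cheerio.load(response.data);  // parse the HTML into a queryable document
  return $('title').text().trim();
}

async function main() {
  console.log('Node.js version:', process.version);

  const packages = ['axios', 'cheerio'];
  for (const name of packages) {
    const status = isInstalled(name)
      ? 'is installed'
      : 'is missing (run: npm install ' + name + ')';
    console.log(name, status);
  }

  // Hypothetical usage: node scrape-title.js https://example.com
  const url = process.argv[2];
  const looksLikeUrl = typeof url === 'string' && /^https?:\/\//.test(url);
  if (looksLikeUrl && packages.every(isInstalled)) {
    console.log('Page title:', await scrapeTitle(url));
  }
}

main().catch((err) => console.error('Scrape failed:', err.message));
```

Run without arguments, the script only performs the installation checks. Swapping `'title'` for another CSS selector is how you would target other parts of the page.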
